NEW YORK — Manipulated videos are becoming increasingly sophisticated, but the conversation about how newsrooms should report on them is still relatively new. What are the different ways a video can be manipulated? What descriptions work effectively in headlines? Research shows that debunking misinformation in a way that actually sticks with readers and doesn’t solidify falsehoods is a finer art than we’d hope for.

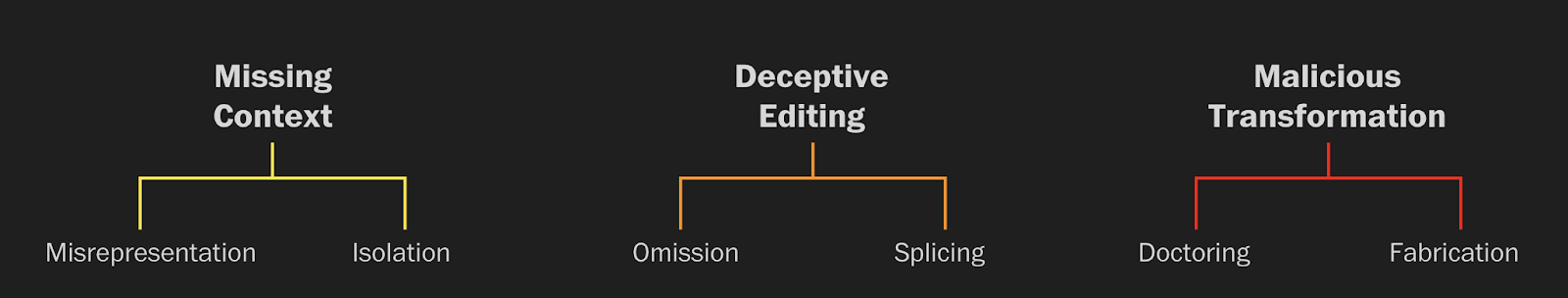

This week, The Washington Post released its own guide to categorising manipulated video. The guide lays out three ways videos are altered, separated into six types:

(Image: Washington Post)

We spoke with Glenn Kessler, the editor of the newspaper’s fact-checking column, The Fact Checker, to learn how the Post developed the guide and how it can be used by other newsrooms and the general public.

First Draft: What prompted the video categorisation project at The Washington Post?

Glenn Kessler: We’ve seen an explosion of videos that are either being deliberately misrepresented, or they’re being edited in some way to change how people view what has happened, or you see things that go all the way to deepfakes. Video is the mode of communication of the future. In the last two years we’ve expanded [The Fact Checker] to video fact checks. They get five times as many views as our text fact checks. That’s an indication of how many more people get their information through video than they do from print. So we want to try to get ahead of this problem and help people gain a common understanding. This election season is going to be pretty rocky. It’s just the beginning.

What were the challenges you faced in coming up with this typology?

The concept that I have in mind is like branches of a tree. Each of these buckets is a branch, and these branches can grow over time, depending on what happens in the video landscape. You can see the three branches are very distinct [Missing Context; Deceptive Editing; Malicious Transformation]. It involved a lot of work with members of our video team, particularly Nadine Ajaka and Elyse Samuels, who spent a lot of time looking at manipulated videos and trying to find the best possible examples to illustrate these concepts.

Did you have any trouble narrowing all the possible ways one could manipulate video to these six?

It was about a five- or six-month process. We tried to go at it very organically. We looked at lots of videos, we thought about the kinds of videos we have fact-checked, and these were the categories we came up with. It also became a matter of trying to come up with labels that people could instantly understand.

Will you be labelling your videos using this typology?

Yes. And we want others to do this as well. It doesn’t have to necessarily mention The Fact Checker, but the idea is that there’s a common language that everyone could use to label video.

Did you do any testing of these labels on audiences? The specific label that comes to mind is “doctored.” Do you think audiences know what doctored means?

I hope so! We didn’t do market testing or anything like that. We did have The Washington Post copy desk, which is among the best in the business, go over the descriptions and phrases very carefully, and we did a lot of work with them to get it tight.

At the end of this five-month process, were you surprised by the number of types you came up with?

No, we weren’t necessarily surprised. We’d been seeing a lot of manipulated video. As you can see on the landing page, for each of these categories there were three examples, and virtually all of these examples were things we had fact-checked before. What I’m curious to see is what happens in the future and what we have not anticipated yet.

How will reader submissions fit into this project?

As soon as someone submits something, the team will get an email. And then we’ll look into whether it’s something that is worthy of a fact-check and a label, and whether or not it gives us insight into something that is a new category.

So the hope is that this becomes a universal language and other newsrooms adopt the same labels?

Yes. Other newsrooms, other fact checkers, and also YouTube, Google, Facebook. We want to start a necessary conversation, and we’re laying down the first marker, but obviously it can evolve and change according to the feedback we get and the needs of the moment.

What is the necessary conversation you’re hoping to start?

The goal is to have a common language, so that it’s easy for people to say, “Ah, this video is an example of something that’s deceptively edited”, or “this is an example of omission”, and everyone would understand what that means. That way you can more quickly identify, label and diminish the impact of manipulated video. Right now there isn’t that common language and everyone talks about it differently. Sometimes it takes a while to figure out what is actually going on in that video or how that video is being presented.

Do you think the vocabulary you have come up with is translatable into other languages?

Hopefully, or at least a concept. I don’t know if “isolation” would mean the same in German and in Swahili and in Japanese, but there might be words in those languages that reflect at least the concept. I was just in Cape Town for the annual meeting of [fact-checking conference] Global Fact, and there were 300 people there representing a range of cultures and languages. It’s fascinating for me, as someone who’s been part of fact-checking from the beginning, to see how it has evolved to deal with the needs of each of the individual communities. So for instance, relatively few people use WhatsApp in the United States, but it’s a huge problem in South America, Africa and India. So fact-checking organisations spend a lot of time dealing with things that are going viral on WhatsApp as opposed to, say, Facebook. So, the actual words? I don’t know how they would necessarily translate. But hopefully at least the concepts.

What do you hope Washington Post audiences and other newsrooms will take away from this guide?

It’s really hard to get video out of your head, and the impact is so dramatic. We’re hoping [that] we’re convincing people that [manipulated video] is a serious problem and that people are more rapidly trying to debunk manipulated video as it appears. It’s not an issue of left or right, because both sides can be victims of this very easily.

This interview has been edited and condensed for clarity and length.